Maximizing Iron Unit Yield from Ore to Liquid Steel (Part 1 – Ore Selection)

This series is based on the paper “Getting the Most from Raw Materials – Iron Unit Yield from Ore to Liquid Steel via the Direct Reduction/EAF Route” by Christopher Manning, PhD, Materials Processing Solutions, Inc., and Vincent Chevrier, PhD, Midrex Technologies, Inc., and on articles previously published in Direct From Midrex.

Beginning with this issue, DFM will present a three-part series on getting the most from raw materials, focusing on the four interrelated factors that influence iron unit yield via the DR/EAF route:

- Ore selection (Part 1)

- DRI physical properties (Part 2)

- DRI handling and storage (Part 2)

- Melting practice (Part 3)

INTRODUCTION

Iron units represent the largest operating expense of a direct reduction plant, and metallic iron units represent the largest operating expense of an EAF steelmaking plant. Increasing or decreasing yield by a few percentage points can have a greater impact on total operating cost than a 50% swing in energy cost.

Yield is king when evaluating cost at the metallic iron unit stage, as well as at the liquid metal stage. However, iron unit yield from ore to liquid steel can vary over a wide range. Metallic iron unit losses can add up to greater than 15% during handling, storing, and melting of direct reduced iron (DRI).

Large costs associated with iron unit losses are sometimes hidden, as they can be distributed across several unit operations. These costs must be controlled to remain competitive in today’s iron and steel market, which is why it is essential to be mindful of the factors that influence yield: ore selection, DRI physical properties, handling and storage, and melting practice.
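As a rough illustration of how losses spread across several unit operations compound, the sketch below multiplies per-stage yields into an overall yield. The stage names and loss fractions are hypothetical values chosen only for the arithmetic; they are not plant data from the paper.

```python
from math import prod

def cumulative_yield(stage_losses):
    """Overall iron unit yield after a chain of stages.

    Each stage is assumed to lose a fixed fraction of the iron units
    it receives, so the stage yields multiply.
    """
    return prod(1.0 - loss for loss in stage_losses.values())

# Hypothetical loss fractions per stage (illustrative assumptions only)
stage_losses = {"handling": 0.03, "storage": 0.02, "melting": 0.08}

overall = cumulative_yield(stage_losses)
print(f"Overall yield: {overall:.1%}, total loss: {1 - overall:.1%}")
```

No single stage in this example loses more than 8%, yet the combined loss exceeds 12%, which is why losses that look small in each operation can go unnoticed until they are totaled.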

Traditional integrated blast furnace/BOF operations have had over 100 years to develop procedures, technologies, and methods for maximizing iron unit yield by minimizing, capturing, and recycling oxide wastes at every stage of the process. In contrast, commercial steel production via the direct reduction/EAF route has been around for less than 60 years and still has many opportunities for improvement, such as maximizing net iron unit yield from ore to liquid steel.

PART 1 – ORE SELECTION

Ore chemistry and oxide mechanical properties both influence yield through to liquid steel. An ore with the optimum chemistry may not have the optimum mechanical properties for producing DRI. The ore that leads to maximum yield likely will be a compromise of oxide pellet chemical and mechanical properties. Lump ore also can be reduced in gas-based direct reduction shaft furnaces, but the availability of suitable ores (especially ones with very high Fe content) is becoming more and more limited. In addition, lump ore can cause challenges in the shaft furnace and material handling system. Therefore, pelletized ore currently is the primary feedstock for shaft furnace direct reduction operations around the world. Iron ore specifications for direct reduction use should be determined by the overall economics of the DR plant and the associated steel mill. If it becomes necessary to alter the specifications, the resulting impact on the cost of steel production must be considered.

CHEMICAL CHARACTERISTICS

Specifications for the chemical composition of iron ore feedstocks are usually dictated by the intended user of the DRI, rather than the direct reduction process, because the only major chemical change to the iron ore in the direct reduction process is the removal of oxygen; no melting or refining takes place. As a result, most of the impurities and gangue in the oxide feed are present in the DRI product. Therefore, the iron content of the feed materials should be as high as possible and the gangue content (especially acid gangue constituents, like silica and alumina) as low as possible. The total amount of gangue in oxide pellets and lump ores generally should not exceed 3-4%. Excessive gangue will require additional electric power in the EAF and increase refractory wear. It should be noted that the removal of oxygen from the iron oxide pellets and lump ores will cause an apparent increase in the percentage of both the iron and the impurities, although the relative amount of each remains constant.
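The apparent enrichment caused by oxygen removal can be checked with a short mass balance. The sketch below is a simplification under stated assumptions: all iron in the oxide is taken to be hematite (Fe2O3), everything else is gangue, unreduced iron stays as FeO, and carbon pickup is ignored. The 67% Fe pellet and 94% metallization are illustrative inputs, and the function name is hypothetical.

```python
FE = 55.845  # atomic mass of iron, g/mol
O = 15.999   # atomic mass of oxygen, g/mol

def dri_composition(fe_total_pct, metallization):
    """Estimate DRI composition (wt%) per 100 kg of oxide feed.

    Assumptions (not from the source): iron present only as Fe2O3,
    balance is gangue, unreduced iron remains as FeO, no carbon pickup.
    """
    fe2o3 = fe_total_pct * (2 * FE + 3 * O) / (2 * FE)    # kg Fe2O3 in oxide
    gangue = 100.0 - fe2o3                                # kg gangue in oxide
    fe_met = fe_total_pct * metallization                 # kg metallic Fe
    feo = (fe_total_pct - fe_met) * (FE + O) / FE         # kg residual FeO
    dri_mass = fe_met + feo + gangue                      # kg DRI produced
    scale = 100.0 / dri_mass
    return {
        "fe_total": fe_total_pct * scale,
        "fe_metallic": fe_met * scale,
        "gangue": gangue * scale,
        "gangue_in_oxide": gangue,
        "dri_mass_per_100kg_oxide": dri_mass,
    }

result = dri_composition(fe_total_pct=67.0, metallization=0.94)
for name, value in result.items():
    print(f"{name}: {value:.2f}")
```

Under these assumptions both total Fe and gangue rise as a percentage of the product, while the gangue-to-iron ratio is the same in the oxide and in the DRI.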

The following chemical constituents should be considered when selecting an iron ore for direct reduction use:

- Total iron

- Silica & alumina (acid gangue)

- Lime & magnesia (basic gangue)

- Phosphorus

- Sulfur

- Copper

- Titania

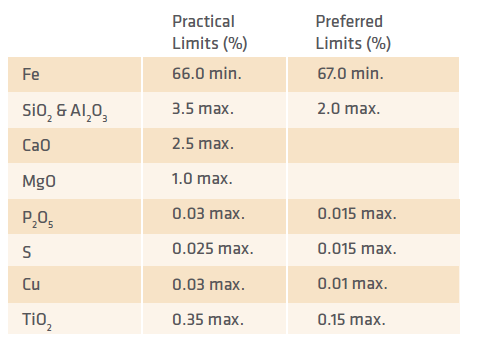

TABLE I. Iron Oxide Chemical Quality Limits

TABLE I shows the maximum practical chemical quality for oxide materials, as well as the preferred limits for producing DRI best suited for EAF steelmaking.

PHYSICAL CHARACTERISTICS

The physical attributes of iron ores often are more important to a direct reduction process than the chemical characteristics. A preferred DR feedstock will be of consistent size to allow homogeneous feeding, have good reducibility characteristics, and sufficient mechanical strength to prevent degradation and fines generation during handling, transport, and melting. These characteristics are determined by screen analysis, tumble test, and the measurement of compression strength.

The mechanical properties of the oxide, such as crush strength or drop strength, impact the overall yield of the DR plant, as oxide fines/dust can be generated during handling and storage. Most of the handling and storage concepts for oxides also apply for DRI.

The following physical characteristics should be considered when selecting an iron ore for direct reduction use:

- Size – About 95% of pellets should be in the size range of 9-16 mm. Lump ores should have a size range of 10-35 mm, with 85% within the range. The -3 mm fraction should be minimized.

- Mechanical Strength – Tumble strength for pellets should be 90-95% +6.73 mm. For lump ores it should be 85-90% +6.73 mm. Cold compression strength for pellets should be 250 kg or greater. Tumble strength and cold compression are indications of how well the oxide pellets have been indurated. Low tumble and cold compression strengths mean higher fines generation during handling.

- Bulk Density – Low bulk density reduces the weight of material that a hopper or other volumetric device, such as the reduction furnace, can hold. Pellets and lump ores should have a bulk density of at least 2.2 t/m³.
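The guideline values above can be collected into a simple screening check for an incoming lot. This is a minimal sketch, not a procurement specification: the `OxideLot` class and `check_lot` function are hypothetical names, the lower end of each quoted range is treated as the minimum, and "about 95%" is treated as a hard threshold for simplicity.

```python
from dataclasses import dataclass
from typing import Optional

# Minimum values taken from the guidelines above (lower end of each range).
# size_pct is the % of material inside the target size range
# (9-16 mm for pellets, 10-35 mm for lump ores).
LIMITS = {
    "pellet": {"size_pct": 95.0, "tumble_pct": 90.0, "ccs_kg": 250.0},
    "lump": {"size_pct": 85.0, "tumble_pct": 85.0},
}
MIN_BULK_DENSITY = 2.2  # t/m3, pellets and lump ores

@dataclass
class OxideLot:
    kind: str                        # "pellet" or "lump"
    size_pct: float                  # % within the target size range
    tumble_pct: float                # % +6.73 mm after the tumble test
    bulk_density: float              # t/m3
    ccs_kg: Optional[float] = None   # cold compression strength (pellets)

def check_lot(lot):
    """Return the names of the guideline checks that the lot fails."""
    limits = LIMITS[lot.kind]
    failures = []
    if lot.size_pct < limits["size_pct"]:
        failures.append("size")
    if lot.tumble_pct < limits["tumble_pct"]:
        failures.append("tumble")
    if "ccs_kg" in limits and (lot.ccs_kg is None or lot.ccs_kg < limits["ccs_kg"]):
        failures.append("ccs")
    if lot.bulk_density < MIN_BULK_DENSITY:
        failures.append("bulk_density")
    return failures

# Hypothetical lots, for illustration only
pellets = OxideLot("pellet", size_pct=96.0, tumble_pct=92.0, bulk_density=2.3, ccs_kg=270.0)
lump = OxideLot("lump", size_pct=88.0, tumble_pct=82.0, bulk_density=2.1)
print(check_lot(pellets))
print(check_lot(lump))
```

A pass/fail screen like this only flags deviations; whether a deviation is acceptable still depends on the overall economics of the DR plant and the associated steel mill.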

REDUCTION CHARACTERISTICS

Reducibility can be gauged by the residence time in the reduction zone required to reach a certain degree (%) of metallization at a certain temperature. The reduction furnace in a MIDREX® Plant is sized for 4-6 hours of effective burden residence in the reduction zone. Most oxide pellets and lump ores used for direct reduction have adequate reducibility within a 4-hour range at reduction temperatures below the fusion temperature. There is a direct relation between reduction temperature, reducibility, and productivity: the higher the reduction temperature, the higher the reducibility and the productivity. However, the reduction temperature is limited by the point of agglomeration (fusion temperature) inside the reduction furnace. The agglomeration tendency (percentage of pellets sticking together in clusters during reduction) can be reduced by coating the pellets to control the basicity, (CaO + MgO)/(SiO2 + Al2O3). If a particular pellet has a higher clustering tendency, it often can be overcome by blending in lump ore. Most lump ores act as lubricants to the furnace burden, preventing clustering due to their tendency to decrepitate.
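Reading the basicity expression above as the ratio of basic to acid oxides, (CaO + MgO)/(SiO2 + Al2O3), it can be computed directly from a pellet analysis. The sketch below uses a hypothetical analysis in weight percent; the function name is an assumption for illustration.

```python
def basicity(cao, mgo, sio2, al2o3):
    """Four-component basicity: (CaO + MgO) / (SiO2 + Al2O3), all in wt%."""
    acid = sio2 + al2o3
    if acid <= 0:
        raise ValueError("acid gangue (SiO2 + Al2O3) must be positive")
    return (cao + mgo) / acid

# Hypothetical pellet analysis in wt% (illustrative values only)
b4 = basicity(cao=0.7, mgo=0.3, sio2=1.5, al2o3=0.5)
print(f"Basicity: {b4:.2f}")
```

Grouping the numerator and denominator explicitly matters: without the parentheses the expression would divide only MgO by SiO2, which is not the ratio of basic to acid components.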

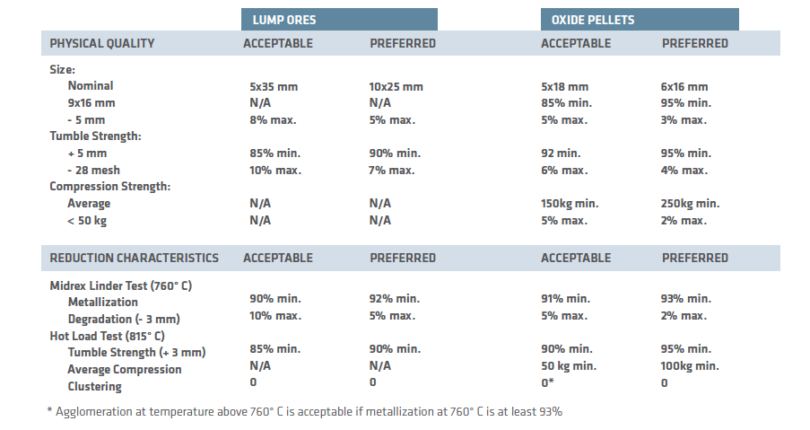

TABLE II shows the desired physical and reduction characteristics for lump ores and oxide pellets used in direct reduction applications.

In direct reduction, thermal fragmentation will occur first as the iron ore heats, followed by reduction fragmentation as the ore starts to reduce from hematite to magnetite. Both occur during the first 30 minutes of reduction. Fines produced by fragmentation are mostly recoverable as metallized fines, which can be briquetted for subsequent use by the EAF steelmaker. Good quality oxide pellets generally experience very low reduction fragmentation.

Most lump ores are subject to thermal fragmentation, which occurs when heating the ore in a temperature range of 375-425 °C. The rate of heating does not seem to be important. However, when the ore reaches the temperature range, some of the ore disintegrates into fragments. Lump ores with low reduction fragmentation will generate 3-4% -4 mm fines, while ores with high reduction fragmentation can yield up to 15% -4 mm fines.

TABLE II. Iron Oxide Physical Quality & Reduction Characteristics

OPTIMUM OXIDE PELLET COMPOSITION

A great deal of laboratory-scale research and plant-level experimentation has been conducted to determine the optimum composition of a DR-grade oxide pellet. The answer depends on specific plant conditions and the iron ore market. In the current market, DR-grade pellets are recognized as a top-tier quality pellet and command a significant price premium.

Several key factors distinguish a DR-grade pellet from a typical blast furnace-grade pellet. Generally, the Fe content of DR-grade pellets is 67% or better, whereas blast furnace-grade pellets are typically 65% Fe or lower. The high iron content is important to minimize gangue, especially acidic components like SiO2 and Al2O3 in the DRI product (cold DRI, CDRI; hot DRI, HDRI; and hot briquetted iron, HBI). Because the majority of DRI is melted directly in an oxidizing steelmaking furnace, higher acid gangue will lead to a larger slag volume in the steelmaking furnace and higher iron losses to the high FeO slag. This is less of a concern for HBI when it is used in a blast furnace under highly reducing conditions. However, the higher Fe content may be needed to meet the density requirement for maritime transport.

In addition to total iron in the pellet, its reducibility also impacts the overall yield. Several factors influence the hot reduction behavior of the oxide pellet in the direct reduction shaft furnace. The specific mineralogy of the ore, as well as the fluxed basicity of the pellet (ratio of basic-to-acid components) will determine the degree of reduction that can be achieved. Lab testing often is the best way to evaluate the reducibility of a given pellet.

In addition to hot reducibility, the oxide pellet chemistry will determine the tendency of the pellet to stick and form clusters in the shaft furnace. Upset conditions in the shaft furnace can result in significant iron unit loss to non-prime product, which may or may not be suitable for recycling through the shaft furnace.

Finally, the sulfur and phosphorus content of the ore can have an indirect impact on the iron unit yield. Higher S and/or P level in the DRI may require different slag practices in the EAF to remove the contaminants. A higher basicity slag will result in a larger slag volume and thus larger FeO loss to the slag. This will be discussed in more detail in Part 3 – Melting Practice of this series of articles.
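The link between acid gangue, slag basicity, slag volume, and iron loss can be sketched with a deliberately simple slag balance. The model below is an assumption made for illustration, not the authors' method: the slag is taken to contain only SiO2 from the DRI, CaO added to reach a target CaO/SiO2 ratio, and FeO at a fixed mass fraction. All input values are hypothetical.

```python
FE = 55.845  # atomic mass of iron, g/mol
O = 15.999   # atomic mass of oxygen, g/mol

def fe_loss_to_slag(sio2_kg, cao_sio2_ratio, feo_fraction):
    """Estimate kg of Fe lost as FeO in slag, per tonne of DRI.

    Simplified model (assumption): slag = SiO2 + CaO + FeO only,
    with FeO at a fixed mass fraction of the total slag.
    """
    if not 0.0 <= feo_fraction < 1.0:
        raise ValueError("feo_fraction must be in [0, 1)")
    non_feo = sio2_kg * (1.0 + cao_sio2_ratio)   # kg SiO2 + CaO
    slag_total = non_feo / (1.0 - feo_fraction)  # kg slag including FeO
    feo_kg = slag_total * feo_fraction
    return feo_kg * FE / (FE + O)                # kg Fe contained in FeO

# Hypothetical cases: 2% vs 4% SiO2 in DRI (20 vs 40 kg/t), 25% FeO in slag
base = fe_loss_to_slag(sio2_kg=20.0, cao_sio2_ratio=2.0, feo_fraction=0.25)
high_gangue = fe_loss_to_slag(sio2_kg=40.0, cao_sio2_ratio=2.0, feo_fraction=0.25)
high_basicity = fe_loss_to_slag(sio2_kg=20.0, cao_sio2_ratio=3.0, feo_fraction=0.25)
print(f"Base case:       {base:.1f} kg Fe/t DRI")
print(f"Higher gangue:   {high_gangue:.1f} kg Fe/t DRI")
print(f"Higher basicity: {high_basicity:.1f} kg Fe/t DRI")
```

In this simplified model the iron loss scales linearly with silica input and rises with the target basicity, consistent with the direction of the effects described above; actual losses depend on the melting practice.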

CONCLUSION

Knowing the operating characteristics of direct reduction-grade ores can prove invaluable in optimizing a plant’s performance and controlling its operating costs. Iron ore purchases represent up to 2/3 of total operating cost; therefore, iron ore evaluation and selection are extremely important to the operational and financial health and longevity of a direct reduction plant.

Reduction characteristics, such as reducibility, agglomeration tendency, and degradation during reduction, should be included in a raw materials specification. However, there are no internationally accepted test procedures to evaluate these properties for direct reduction applications. Therefore, it is very difficult to make ore suppliers accept these properties in specifications, especially if penalties are involved.

In addition, not all iron oxide raw materials possess all of the desired chemical, physical, and reduction characteristics. Therefore, blends of different materials, especially combinations of good quality pellets and lump ores, often are used by direct reduction plants. The advantages and disadvantages of each type of oxide pellet or lump ore must be considered to determine which combination will provide the lowest operating cost while maximizing production and maintaining product quality.